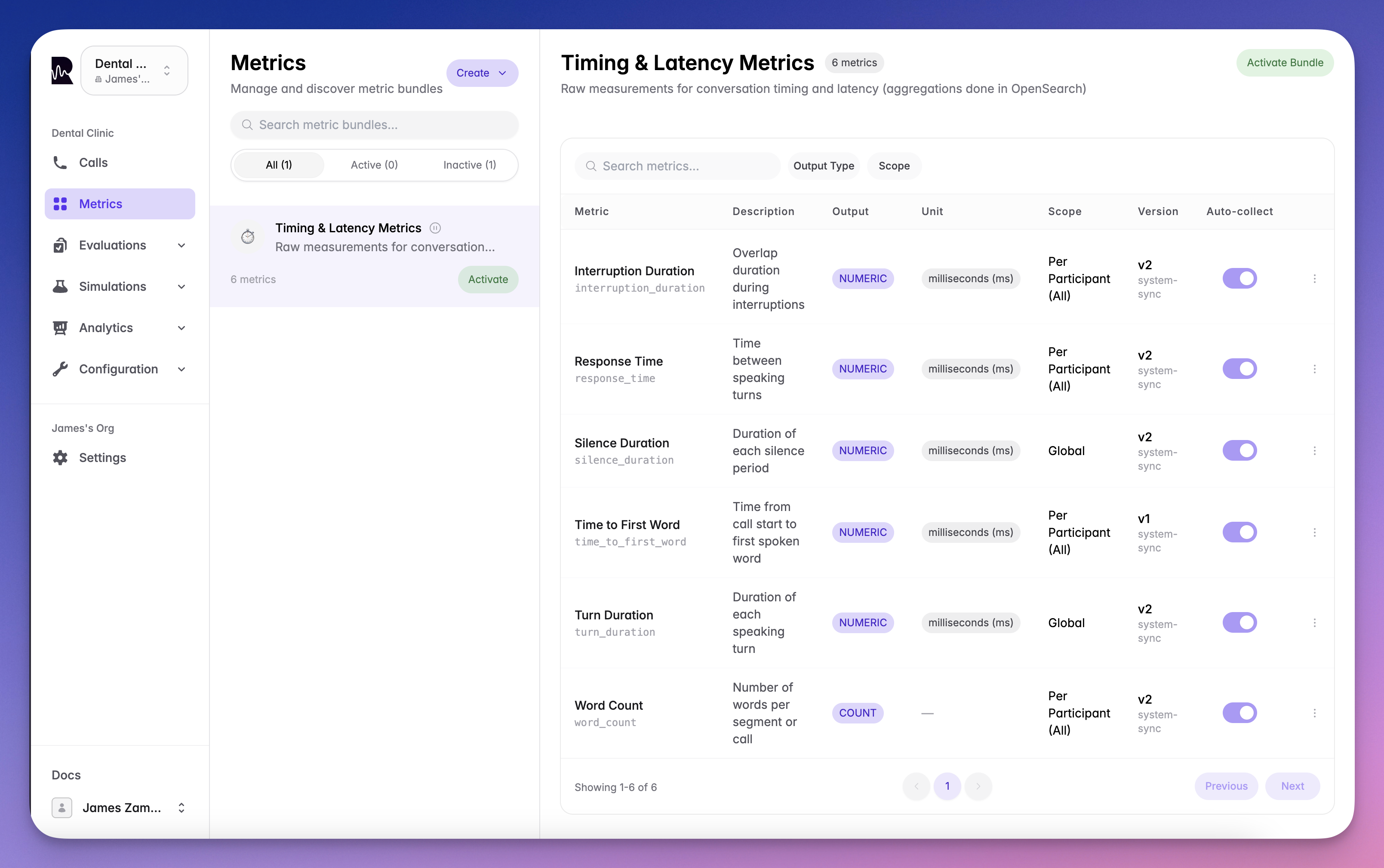

Metric definitions describe what to measure and how. Roark comes with built-in system metrics that work out of the box, and you can create your own custom metrics tailored to your business needs.

Creating Custom Metrics

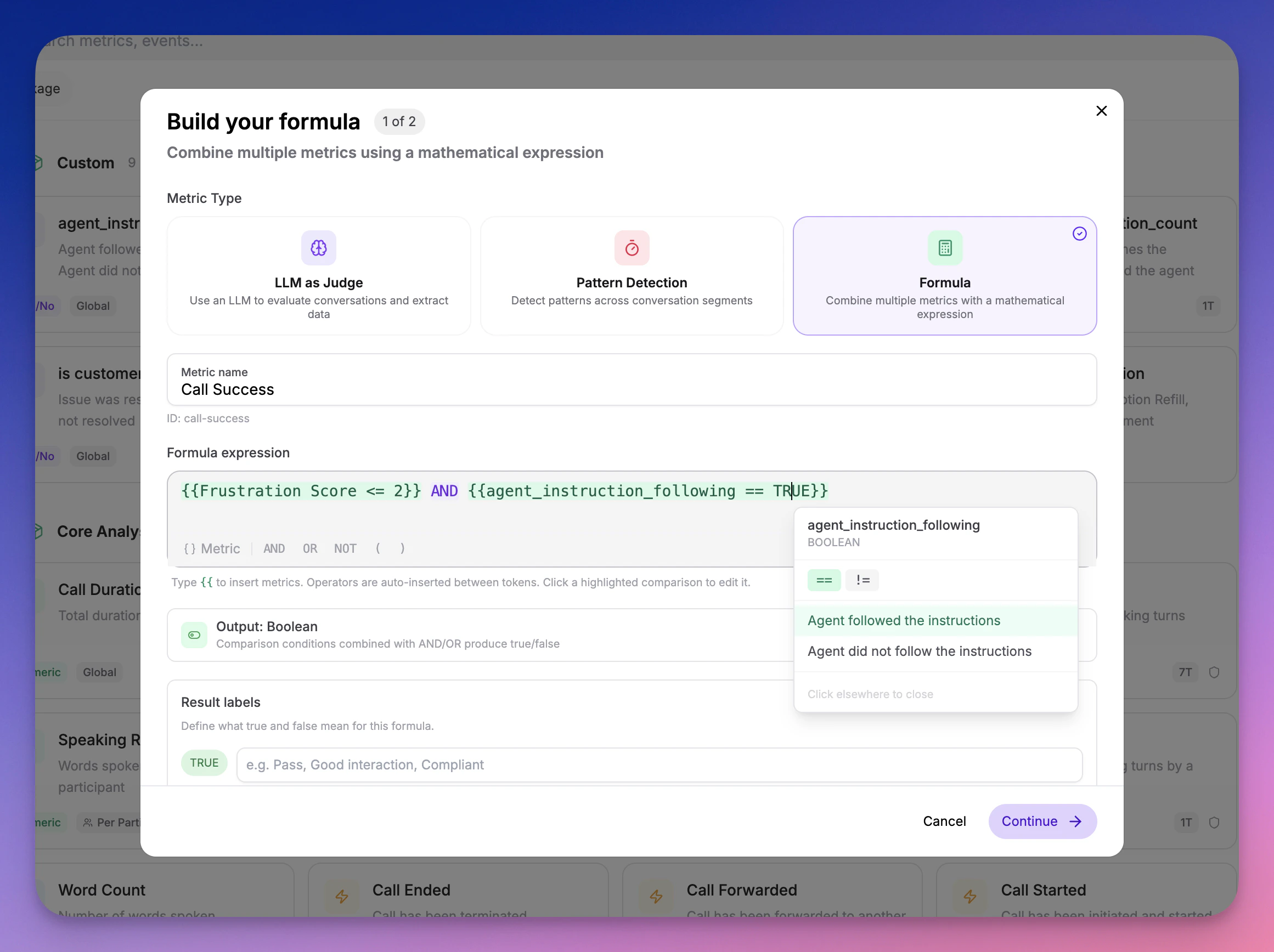

Custom metrics let you measure anything specific to your use case: task completion, compliance checks, quality scoring, or business KPIs.

Custom Metric Types

- LLM as Judge

- Pattern Detection

- Formula

With LLM as Judge, you write a natural-language prompt describing what to measure. Roark Prism, our evaluation model optimized for voice AI, scores each call against your prompt and returns a typed result.

Best for subjective assessments, business logic, and anything that requires understanding conversational context.

Configuration Steps

Define the Metric

Name your metric, choose an output type, and describe what it measures. For LLM as Judge metrics, write the evaluation prompt. For formulas, build the expression from existing metrics.

Test in the Playground

Run your metric against a real call in the Playground to validate it produces the results you expect. Iterate until you’re satisfied.

Add to a Policy or Run Plan

Attach the metric to a metric policy for automated collection on incoming calls, or to a simulation run plan for testing.

SDK Reference

All API endpoints require authentication. Generate an API key to get started.

Create a Metric Definition

Create a new custom metric definition using the SDK.

Parameters:

| Field | Type | Required | Description |
|---|---|---|---|
| name | string | Yes | Name of the metric (1-100 characters) |
| outputType | string | Yes | One of: BOOLEAN, NUMERIC, TEXT, SCALE, CLASSIFICATION, COUNT, OFFSET |
| analysisPackageId | string | Yes | UUID of the analysis package to add this metric to |
| metricId | string | No | Unique identifier (auto-generated from name if omitted) |
| scope | string | No | GLOBAL (default) or PER_PARTICIPANT |
| participantRole | string | Conditional | Required when scope is PER_PARTICIPANT |
| supportedContexts | string[] | No | Defaults to ["CALL"] |
| llmPrompt | string | No | The LLM prompt used to evaluate this metric (max 2000 chars) |
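The constraints in the parameter table can be checked locally before a request is sent. The following is a minimal sketch, not the Roark SDK: `build_metric_definition` is a hypothetical helper, the UUID is a placeholder, and only the field names, allowed values, limits, and defaults come from the table above.

```python
from typing import Any

# Allowed values copied from the parameter table.
OUTPUT_TYPES = {"BOOLEAN", "NUMERIC", "TEXT", "SCALE", "CLASSIFICATION", "COUNT", "OFFSET"}
SCOPES = {"GLOBAL", "PER_PARTICIPANT"}


def build_metric_definition(
    name: str, output_type: str, analysis_package_id: str, **optional: Any
) -> dict[str, Any]:
    """Hypothetical helper (not part of the Roark SDK): assemble a payload
    and apply the documented constraints before sending it anywhere."""
    if not 1 <= len(name) <= 100:
        raise ValueError("name must be 1-100 characters")
    if output_type not in OUTPUT_TYPES:
        raise ValueError(f"unsupported outputType: {output_type}")

    scope = optional.get("scope", "GLOBAL")  # documented default
    if scope not in SCOPES:
        raise ValueError(f"unsupported scope: {scope}")
    if scope == "PER_PARTICIPANT" and not optional.get("participantRole"):
        raise ValueError("participantRole is required when scope is PER_PARTICIPANT")

    prompt = optional.get("llmPrompt")
    if prompt is not None and len(prompt) > 2000:
        raise ValueError("llmPrompt must be at most 2000 characters")

    payload: dict[str, Any] = {
        "name": name,
        "outputType": output_type,
        "analysisPackageId": analysis_package_id,
        "scope": scope,
        "supportedContexts": optional.get("supportedContexts", ["CALL"]),
    }
    # Copy the remaining optional fields only when the caller supplied them.
    for key in ("metricId", "participantRole", "llmPrompt"):
        if key in optional:
            payload[key] = optional[key]
    return payload


payload = build_metric_definition(
    "Identity Verified",
    "BOOLEAN",
    "00000000-0000-0000-0000-000000000000",  # placeholder UUID
    llmPrompt="Did the agent verify the caller's identity before sharing account details?",
)
```

The resulting dictionary mirrors the documented fields, so it can be passed to whichever client call your SDK version exposes for creating metric definitions.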

| Field | Applies To | Description |
|---|---|---|
| booleanTrueLabel / booleanFalseLabel | BOOLEAN | Custom labels for true/false values |
| scaleMin / scaleMax | SCALE | Range boundaries (0-100) |
| scaleLabels | SCALE | Array of label objects with rangeMin, rangeMax, label, displayOrder |
| classificationOptions | CLASSIFICATION | Array of options with label, description, displayOrder |
| maxClassifications | CLASSIFICATION | Maximum number of classifications to select |
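As an illustration of the SCALE fields, the sketch below checks a set of scale labels against the boundaries before they are added to a metric definition. `validate_scale_fields` is a hypothetical helper, not an SDK function, and it assumes that "0-100" means both `scaleMin` and `scaleMax` must fall inside that interval and that each label range must sit within the scale. The label values are made up for the example.

```python
from typing import Any

REQUIRED_LABEL_KEYS = {"rangeMin", "rangeMax", "label", "displayOrder"}


def validate_scale_fields(
    scale_min: int, scale_max: int, scale_labels: list[dict[str, Any]]
) -> dict[str, Any]:
    """Hypothetical helper: check SCALE fields and return them keyed by
    the documented field names, with labels ordered by displayOrder."""
    # Assumption: both boundaries must lie within 0-100.
    if not 0 <= scale_min < scale_max <= 100:
        raise ValueError("scaleMin and scaleMax must satisfy 0 <= min < max <= 100")

    for item in scale_labels:
        missing = REQUIRED_LABEL_KEYS - item.keys()
        if missing:
            raise ValueError(f"scale label is missing keys: {sorted(missing)}")
        # Assumption: every label range stays inside the scale boundaries.
        if not scale_min <= item["rangeMin"] <= item["rangeMax"] <= scale_max:
            raise ValueError(f"label {item['label']!r} falls outside the scale")

    return {
        "scaleMin": scale_min,
        "scaleMax": scale_max,
        "scaleLabels": sorted(scale_labels, key=lambda item: item["displayOrder"]),
    }


scale_fields = validate_scale_fields(
    0,
    10,
    [
        {"rangeMin": 7, "rangeMax": 10, "label": "Good", "displayOrder": 2},
        {"rangeMin": 0, "rangeMax": 6, "label": "Needs work", "displayOrder": 1},
    ],
)
```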

List Metric Definitions

Retrieve all available metric definitions for your project.

Get Call Metrics

Retrieve all metrics for a specific call.

Understanding Metric Values

Confidence Scores:

- All metrics include a `confidence` field (0-1)
- Deterministic metrics (like word count, duration) have confidence = 1.0
- AI-powered metrics include the model’s confidence level

Value Reasoning:

- For AI-computed metrics, the `valueReasoning` field provides explanation
- Useful for understanding why a metric was scored a certain way
- Example: “The agent verified identity using two-factor authentication as mentioned in segment 3”

Context:

- When `context` is `SEGMENT`, the `segment` field contains the specific utterance
- When `context` is `SEGMENT_RANGE`, both `fromSegment` and `toSegment` are included
- All segment objects include the full text and timing information
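The fields described above can be read with a few lines of code. This is a sketch over hand-written sample records, not real API output: the `name`, `value`, and segment `text` keys are assumptions about the response shape, and the 0.8 threshold is an arbitrary illustration. Only `confidence`, `valueReasoning`, `context`, `segment`, `fromSegment`, and `toSegment` come from the text above.

```python
from typing import Any, Optional


def low_confidence(metrics: list[dict[str, Any]], threshold: float = 0.8) -> list[dict[str, Any]]:
    """Return metrics whose confidence is below the threshold, for manual review."""
    return [m for m in metrics if m["confidence"] < threshold]


def evidence_text(metric: dict[str, Any]) -> Optional[str]:
    """Return the utterance text a metric points at, if it has segment context."""
    context = metric.get("context")
    if context == "SEGMENT":
        return metric["segment"]["text"]  # "text" key is an assumed name
    if context == "SEGMENT_RANGE":
        return f'{metric["fromSegment"]["text"]} ... {metric["toSegment"]["text"]}'
    return None  # call-level metrics carry no segment


# Hypothetical sample records shaped after the field descriptions above.
sample_metrics = [
    {"name": "word_count", "value": 412, "confidence": 1.0, "context": "CALL"},
    {
        "name": "identity_verified",
        "value": True,
        "confidence": 0.72,
        "valueReasoning": "The agent verified identity using two-factor authentication",
        "context": "SEGMENT",
        "segment": {"text": "Can you read me the code we just sent you?"},
    },
]

for metric in low_confidence(sample_metrics):
    print(metric["name"], metric.get("valueReasoning"), evidence_text(metric))
```

Deterministic metrics report a confidence of 1.0, so a filter like this only ever surfaces AI-computed results.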

Best Practices

Start with System Metrics


Use the built-in system metrics first — they cover performance, sentiment, interruptions, compliance, and more with no setup. Add custom metrics for business-specific needs.

Test Before Deploying


Always validate custom metrics in the Playground on representative calls before attaching them to policies.

Use Formulas for Composite Scores


Instead of creating one complex LLM prompt that tries to measure everything, break it into focused metrics and combine them with a formula.
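To make the idea concrete, here is a sketch of the arithmetic behind a composite score: a weighted average of several focused metrics. It is plain Python, not Roark's formula syntax, and the metric names, values, and weights are invented for the example.

```python
def composite_score(values: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of focused metric values (illustrative only)."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Each focused metric contributes in proportion to its weight.
    return sum(values[name] * weight for name, weight in weights.items()) / total_weight


# Hypothetical focused metrics, each scored 0-100 by its own definition.
values = {"greeting_quality": 80, "identity_verified": 100, "issue_resolved": 60}
weights = {"greeting_quality": 2, "identity_verified": 5, "issue_resolved": 3}

score = composite_score(values, weights)  # (160 + 500 + 180) / 10
```

Keeping each input metric narrow makes a low composite score easier to trace back to the component that caused it.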

Balance Coverage


Track both agent and customer metrics for complete conversation understanding.

What’s Next

System Metrics Reference

Browse all 65+ built-in metrics powered by specialized models

Playground

Test metrics interactively before deploying

Thresholds

Define pass/fail criteria for your metrics

Policies

Automate metric collection with conditions-based rules