Metrics are how you measure what happens in every voice AI conversation. Roark collects some metrics automatically (such as response time, talk time, and sentiment) and lets you define your own using LLM prompts for things like compliance checks, task completion, or custom business KPIs.

What’s a Metric?

A metric is a single measurement collected from a call. It has:

- An output type: boolean, numeric, scale, text, classification, count, or offset

- A scope: global (one value per call) or per-participant (separate values for agent and customer)

- A context: call-level, segment-level (a single utterance), or segment-range (a span of conversation)

For example, response_time is a numeric, per-participant, segment-range metric that measures how long each speaker takes to respond. A custom metric such as identity_verified might be a boolean, global, call-level metric powered by an LLM prompt.
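The three attributes above can be modelled as a small data structure. This is a minimal sketch for illustration only: the class and field names (`MetricDefinition`, `OutputType`, `Scope`, `Context`) are hypothetical and are not taken from the Roark SDK. Only the enumerated values come from this page.

```python
from dataclasses import dataclass
from enum import Enum


class OutputType(Enum):
    BOOLEAN = "boolean"
    NUMERIC = "numeric"
    SCALE = "scale"
    TEXT = "text"
    CLASSIFICATION = "classification"
    COUNT = "count"
    OFFSET = "offset"


class Scope(Enum):
    GLOBAL = "global"                    # one value per call
    PER_PARTICIPANT = "per-participant"  # separate values for agent and customer


class Context(Enum):
    CALL = "call-level"
    SEGMENT = "segment-level"        # a single utterance
    SEGMENT_RANGE = "segment-range"  # a span of conversation


@dataclass(frozen=True)
class MetricDefinition:
    """Hypothetical container for the three attributes every metric has."""
    name: str
    output_type: OutputType
    scope: Scope
    context: Context


# The two examples from the text, expressed with the hypothetical types.
response_time = MetricDefinition(
    "response_time", OutputType.NUMERIC, Scope.PER_PARTICIPANT, Context.SEGMENT_RANGE
)
identity_verified = MetricDefinition(
    "identity_verified", OutputType.BOOLEAN, Scope.GLOBAL, Context.CALL
)
```

Modelling the attributes as enums rather than free-form strings makes an invalid combination, such as a misspelled scope, fail at definition time.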

Types of Metrics

System Metrics (Built-in)

Roark automatically collects deterministic and voice-analysis metrics for every call, with no configuration needed. Voice-analysis metrics are powered by Roark’s custom voice analysis models, purpose-built to extract signal from conversational audio:

- Performance: Response time, talk time, silence duration, overlap/interruptions, latency

- Emotion & Sentiment: Sentiment tracking, 64+ emotion detection, vocal cues (raised voice, frustration), stress indicators

- Speech: Interruption detection, pause analysis, repetition detection

- Compliance: Disclosure completeness, prohibited language, PII handling, prompt injection resistance

- Call Quality: Speech quality scoring (DNSMOS), accent detection, voicemail handling

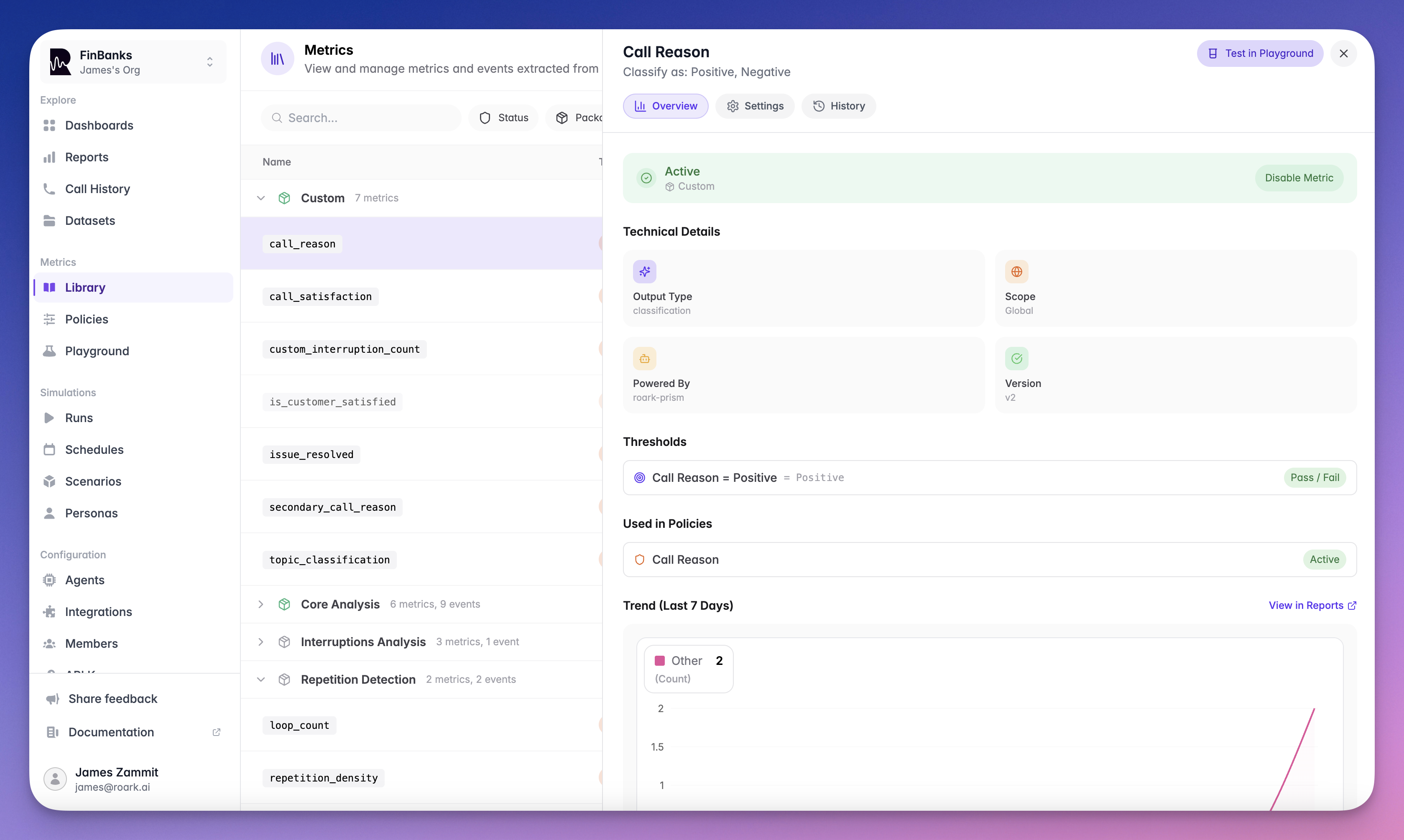

Custom Metrics (LLM-Powered)

Define your own metrics using natural language prompts. Custom metrics are evaluated by Roark Prism, our purpose-built evaluation model optimized for scoring voice AI conversations. You write a prompt describing what to measure, and Prism evaluates each call against it and returns a typed result:

- “Did the agent verify the caller’s identity?” → Boolean

- “Rate the agent’s empathy on a scale of 1-10” → Scale

- “What was the primary reason for the call?” → Classification

- “How many times did the agent attempt to upsell?” → Count
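To make the idea of a typed result concrete, the sketch below coerces a raw text answer into the output type declared for each of the prompts above. It is an assumption-laden illustration: `coerce_result`, its parameters, and the accepted spellings are hypothetical, and this page does not describe how Roark Prism actually formats or validates its results.

```python
def coerce_result(output_type, raw, *, scale=(1, 10), labels=()):
    """Hypothetical helper: turn a raw text answer into a typed metric value."""
    text = raw.strip()

    if output_type == "boolean":
        lowered = text.lower()
        if lowered in ("true", "yes"):
            return True
        if lowered in ("false", "no"):
            return False
        raise ValueError(f"not a boolean answer: {raw!r}")

    if output_type == "scale":
        value = int(text)
        low, high = scale
        if not low <= value <= high:
            raise ValueError(f"{value} is outside the scale {low}-{high}")
        return value

    if output_type == "count":
        value = int(text)
        if value < 0:
            raise ValueError("a count cannot be negative")
        return value

    if output_type == "classification":
        if text not in labels:
            raise ValueError(f"{text!r} is not one of the allowed labels")
        return text

    raise ValueError(f"unsupported output type: {output_type!r}")


# One call per example prompt above (the answers and labels are made up).
verified = coerce_result("boolean", "Yes")
empathy = coerce_result("scale", "8", scale=(1, 10))
reason = coerce_result("classification", "billing", labels=("billing", "support"))
upsells = coerce_result("count", "2")
```

Declaring the output type up front is what lets a value be compared against a threshold or aggregated later, since every call yields a value of the same shape.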

How It All Fits Together

Here’s the typical sequence from defining a metric to seeing results:

Define or Choose a Metric

Use a built-in system metric, or create your own custom metric with an LLM prompt, pattern, or formula.

Test in the Playground

Run your metric against a real call in the Playground to validate it produces the results you expect. Iterate on the prompt until you’re satisfied.

Add Thresholds (Optional)

Set pass/fail criteria on your metric, for example Customer Satisfaction >= 7 or Response Time < 1000ms. Thresholds turn raw values into actionable outcomes.

Decide When to Collect

Choose how and when your metric runs:

- Metric Policies — Automate collection on incoming calls (monitoring). Set conditions to target specific agents, sources, or call properties.

- Simulation Run Plans — Attach metrics with thresholds to simulation tests to validate agent behavior before deployment.

- Collection Jobs — Run metrics on demand against existing calls via the SDK, useful for backfilling or re-processing.

Analyze Results

View metric values per call in Call History, aggregate them in Reports, and organize everything in Dashboards.
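The threshold step in the sequence above can be sketched as a comparison between a collected value and a limit. The example below encodes the two thresholds mentioned earlier (Customer Satisfaction >= 7 and Response Time < 1000ms). The `Threshold` class, the metric keys, and the sample call values are all hypothetical; this is not how Roark stores or evaluates thresholds, only a picture of the pass/fail logic.

```python
import operator
from dataclasses import dataclass

# Comparison operators a threshold may use (an assumed, illustrative set).
_OPERATORS = {
    ">=": operator.ge,
    ">": operator.gt,
    "<=": operator.le,
    "<": operator.lt,
    "==": operator.eq,
}


@dataclass(frozen=True)
class Threshold:
    """Hypothetical pass/fail rule attached to a metric."""
    metric: str
    op: str
    limit: float

    def passes(self, value):
        return _OPERATORS[self.op](value, self.limit)


def evaluate(call_values, thresholds):
    """Return a pass/fail flag per metric for one call."""
    return {t.metric: t.passes(call_values[t.metric]) for t in thresholds}


thresholds = [
    Threshold("customer_satisfaction", ">=", 7),
    Threshold("response_time_ms", "<", 1000),
]

# Made-up metric values for a single call.
call = {"customer_satisfaction": 8, "response_time_ms": 1240}
results = evaluate(call, thresholds)
# results == {"customer_satisfaction": True, "response_time_ms": False}
```

Keeping the rule separate from the metric value means the same collected value can be judged against different bars, for instance a stricter one in simulations than in production monitoring.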

Quick Start Examples

- Use a System Metric

- Create a Custom Metric

- Use Metrics in Simulations

System metrics like response_time are already collected for every call. To set a quality bar, create a metric policy with a threshold. For example, with a 1-second threshold on the agent’s P95 response time, calls that exceed it are automatically flagged as failures.

Sections

System Metrics Reference

Browse all 65+ built-in metrics powered by specialized models

Custom Metrics

Create custom metrics with LLM prompts, patterns, and formulas

Playground

Test metrics interactively against real calls

Metric Policies

Automate metric collection with conditions-based rules

Thresholds

Define pass/fail criteria for your metrics

Collection Jobs

Run metrics on demand for existing calls via the SDK